Punching Above Its Weight: Meet Qwen 3.5 and the God-Tier 9B Architecture Everyone's Talking About

Disclaimer: This post is machine-translated from the original Chinese article: https://ai-coding.wiselychen.com/qwen-3-5-9b-small-model-god-tier-architecture/


Author: Wisely Chen

Date: March 2, 2026

Series: AI Architecture

Keywords: Qwen 3.5, 9B, Small Language Model, Dense Architecture, Hybrid Attention, Gated Delta Network, Local LLM, Open Source

Table of Contents

- Why I’m Writing This

- What Is Qwen 3.5? A 30-Second Overview

- The 9B Scorecard: Let the Numbers Talk

- Breaking Down the Magic #1: Hybrid Attention

- Breaking Down the Magic #2: Native Multimodal “Early Fusion”

- Breaking Down the Magic #3: RL Pushed Down to Small Models

- The Key Decision: Why Does 9B Stick With Dense Instead of MoE?

- What This Actually Means for Enterprise Local Deployment

- Honestly

Why I’m Writing This

On March 2, 2026, Alibaba’s Qwen team dropped the final wave of the Qwen 3.5 series—the Small series, including four sizes: 0.8B, 2B, 4B, and 9B.

And my tech chat groups exploded.

What everyone was talking about wasn’t the 397B flagship. It was the “just 9B parameters” small model. The reason was simple: it beat the previous-generation Qwen3-80B (9x its size) on multiple benchmarks, and even punched above its weight against OpenAI’s GPT-OSS-120B (roughly 13x its size).

VentureBeat’s headline put it bluntly: “Alibaba’s small, open source Qwen3.5-9B beats OpenAI’s GPT-OSS-120B and can run on standard laptops.”

I’ve written before about enterprise on-premise LLM system architecture, and in my piece on running local LLMs against Excel, I actually ran Gemma 3’s small model. So when it comes to “what can small models really do,” I’ve got some hands-on experience.

Today, let’s break down what Qwen 3.5-9B actually did on the technical side to punch this far above its weight.

What Is Qwen 3.5? A 30-Second Overview

Qwen 3.5 is the open-source model series that Alibaba’s Qwen team released in three waves in February–March 2026, all under Apache 2.0.

The three-wave timeline:

| Date | Wave | Models |
|---|---|---|
| 2/16 | Flagship | 397B-A17B (MoE, 512 experts) |
| 2/24 | Mid-size | 27B, 35B-A3B, 122B-A10B |
| 3/2 | Small | 0.8B, 2B, 4B, 9B |

Three core selling points: native multimodal, hybrid attention architecture, and purpose-built for Agentic AI.

And 9B is the breakout star of this small model lineup.

The 9B Scorecard: Let the Numbers Talk

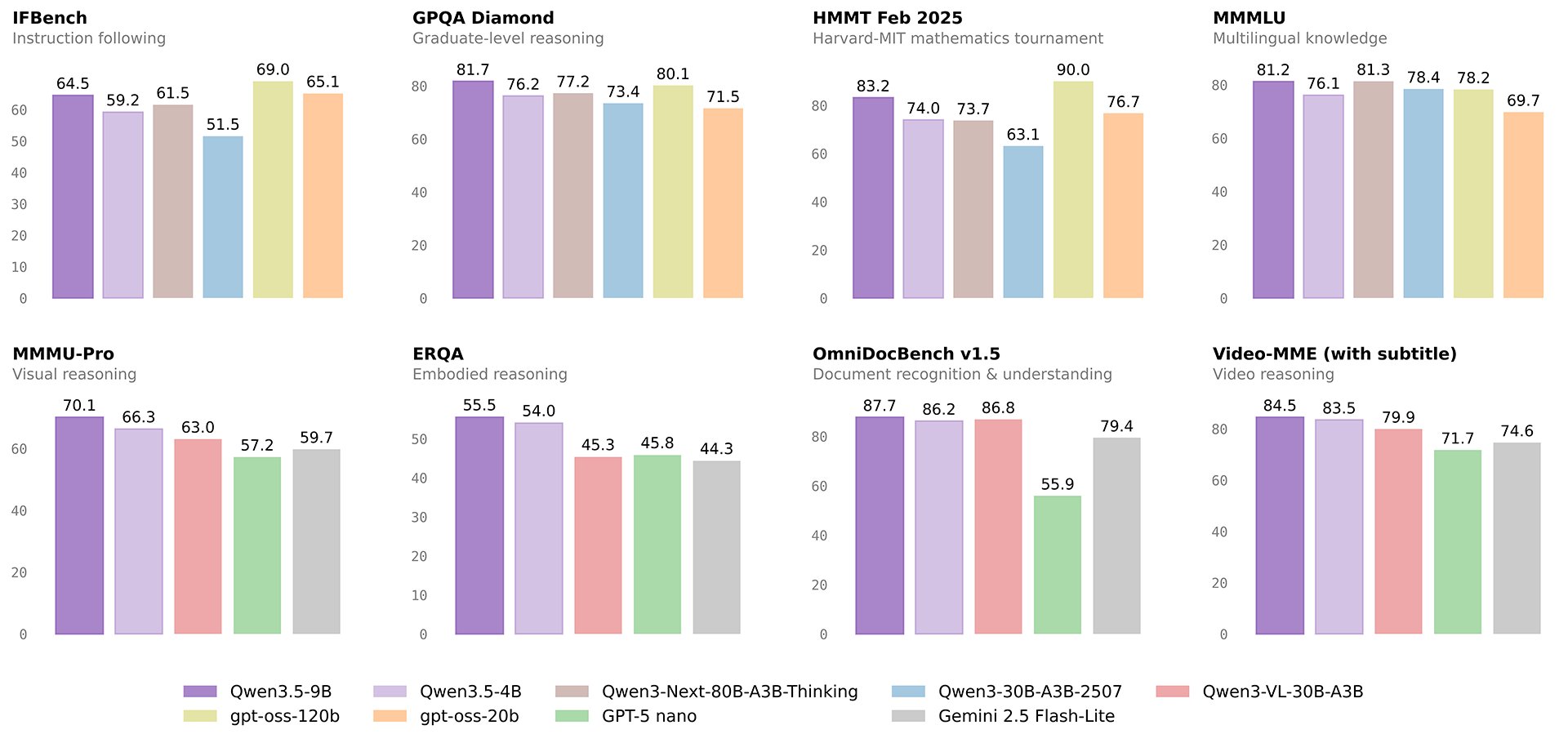

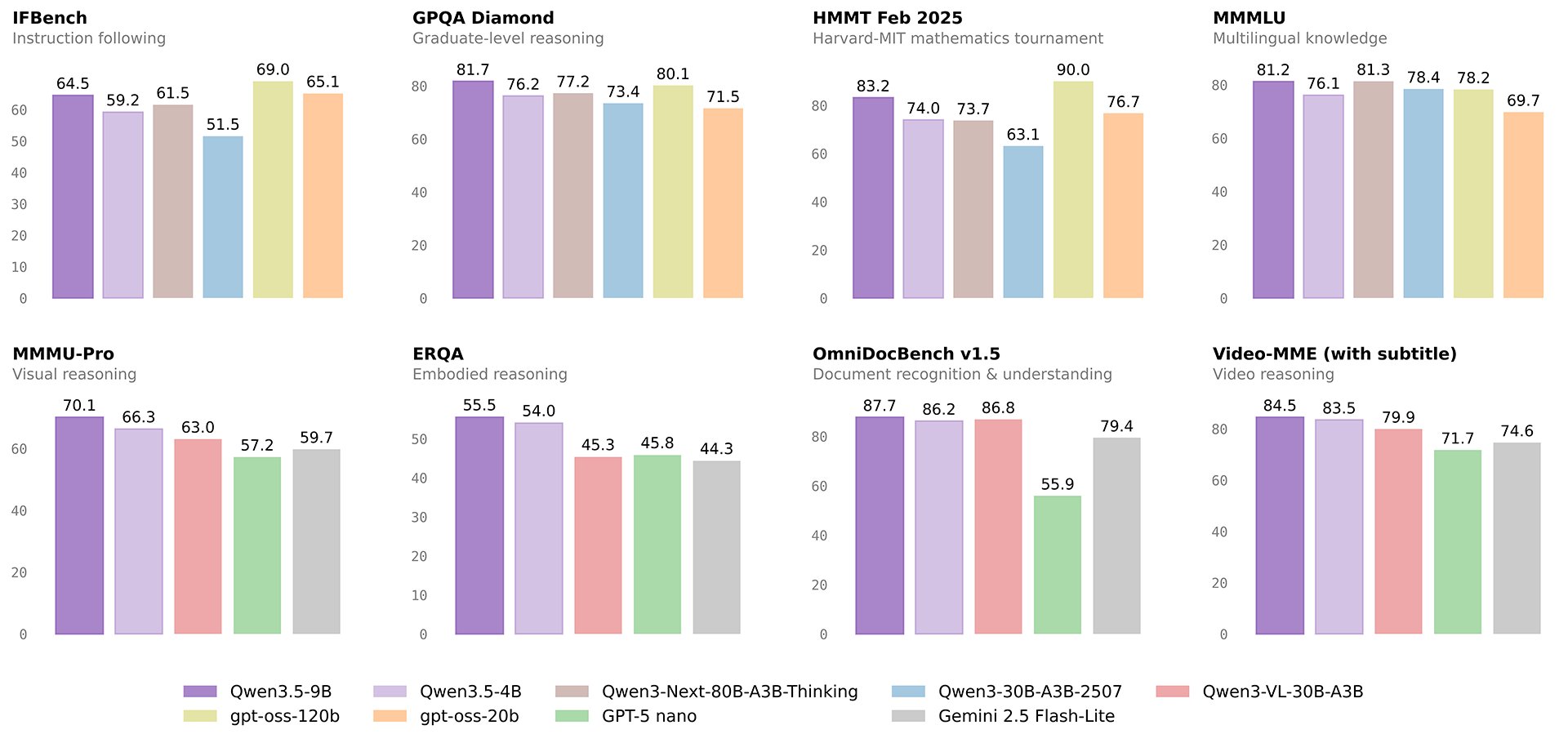

Hard data first, no spin:

vs. Last-Gen Qwen3-80B (9x Bigger)

| Benchmark | Qwen3.5-9B | Qwen3-80B | Result |
|---|---|---|---|
| GPQA Diamond | 81.7 | 77.2 | 9B wins |
| IFEval (instruction following) | 91.5 | 88.9 | 9B wins |
| LongBench v2 (long context) | 55.2 | 48.0 | 9B wins |
| MMLU-Pro | ~82.5 | ~82.5 | Tie |

vs. OpenAI GPT-OSS-120B (About 13x Bigger)

| Benchmark | Qwen3.5-9B | GPT-OSS-120B | Result |
|---|---|---|---|
| GPQA Diamond | 81.7 | 71.5 | 9B wins |
| HMMT Feb 2025 (math) | 83.2 | 76.7 | 9B wins |
| MMMU-Pro (multimodal understanding) | 70.1 | 59.7 | 9B wins |
| MMLU-Pro | 82.5 | 80.8 | 9B wins |
| MMMLU (multilingual) | 81.2 | 78.2 | 9B wins |

Several of these aren’t “slight leads”: on MMMU-Pro it’s 70.1 vs. 59.7, a gap of more than 10 points, and GPQA Diamond shows a similar gap (81.7 vs. 71.5). Using a 9B model to beat a 120B model is something that would have been unthinkable two years ago.
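To make the size of each lead easy to check, here is a small sketch that recomputes the gaps from the GPT-OSS-120B table. The scores are copied from the table as published; the helper name `score_gaps` is just an illustrative choice.

```python
# Scores copied from the GPT-OSS-120B comparison table:
# (Qwen3.5-9B, GPT-OSS-120B)
scores = {
    "GPQA Diamond": (81.7, 71.5),
    "HMMT Feb 2025": (83.2, 76.7),
    "MMMU-Pro": (70.1, 59.7),
    "MMLU-Pro": (82.5, 80.8),
    "MMMLU": (81.2, 78.2),
}


def score_gaps(table):
    """Return {benchmark: first score minus second score}, rounded to 0.1."""
    return {name: round(a - b, 1) for name, (a, b) in table.items()}


gaps = score_gaps(scores)
largest = max(gaps, key=gaps.get)

# Print benchmarks from the widest lead to the narrowest.
for name, gap in sorted(gaps.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name:15s} +{gap:.1f}")
```

The leads range from 1.7 points (MMLU-Pro) to 10.4 points (MMMU-Pro), so the margin depends a lot on which benchmark you look at.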

So the question is: how did they pull it off?

Breaking Down the Magic #1: Hybrid Attention

This is the most important architectural innovation across the entire Qwen 3.5 series: hybrid attention built on what’s called the Gated Delta Network.

Traditional Transformers use “full attention”: every token has to compute against every other token. It’s precise, but the cost is that compute grows quadratically with sequence length, and the KV cache keeps growing with every token held in context. Simply put: the longer the text, the more memory it eats and the slower it gets.

Qwen 3.5’s approach: don’t use full attention everywhere. Switch to a 3:1 hybrid ratio—three layers of linear attention for every one layer of standard attention.
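As a rough illustration of the 3:1 ratio, the sketch below labels each layer in a stack as linear or full attention. The layer count and the position of the full-attention layer inside each group of four are assumptions made for the example, not details confirmed by the article.

```python
def hybrid_layer_schedule(num_layers, linear_per_full=3):
    """Label layers in a repeating pattern: N linear layers, then 1 full layer.

    Placing the full-attention layer last in each group is an assumption
    for illustration only.
    """
    period = linear_per_full + 1
    return [
        "full" if (i + 1) % period == 0 else "linear"
        for i in range(num_layers)
    ]


# Hypothetical 8-layer stack, just to show the pattern.
schedule = hybrid_layer_schedule(num_layers=8)
print(schedule)
print("full-attention layers:", schedule.count("full"), "of", len(schedule))
```

Only the layers labeled `full` need a KV cache that grows with sequence length, which is where the memory savings come from.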

The principle behind linear attention is to compress input into a fixed-size state, dropping complexity from O(n²) down to nearly O(n). The benefits are very concrete:

- Native 262K-token context window (about 200,000 Chinese characters)

- Scalable up to 1 million tokens

- Massively reduced KV-cache memory consumption

Translated into plain language: you can throw an entire book in and have it analyzed without worrying about OOM (running out of memory).
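The “fixed-size state” idea can be sketched in a few lines. This is a deliberately simplified decay-gated linear attention, not the actual Gated Delta Network update rule, and the `decay` value and head dimensions are assumptions chosen for illustration. The point is only that the state stays `d_k x d_v` no matter how many tokens pass through.

```python
def linear_attention(queries, keys, values, decay=0.9):
    """Process tokens one by one while keeping only a d_k x d_v state matrix.

    Simplified sketch: the state is decayed by a constant gate and updated
    with the outer product of the current key and value.
    """
    d_k, d_v = len(keys[0]), len(values[0])
    state = [[0.0] * d_v for _ in range(d_k)]
    outputs = []
    for q, k, v in zip(queries, keys, values):
        # Fade the old memory, then write the new key/value association.
        for i in range(d_k):
            for j in range(d_v):
                state[i][j] = decay * state[i][j] + k[i] * v[j]
        # Read the memory with the query: o = q^T S.
        outputs.append(
            [sum(q[i] * state[i][j] for i in range(d_k)) for j in range(d_v)]
        )
    return outputs, state


def stored_numbers(n_tokens, d_k, d_v):
    """Numbers one attention head must keep: growing KV cache vs. fixed state."""
    kv_cache = n_tokens * (d_k + d_v)
    fixed_state = d_k * d_v
    return kv_cache, fixed_state


# Hypothetical head size of 128; 262,144 tokens assumed for "262K".
print(stored_numbers(262_144, 128, 128))
```

Each token costs a constant amount of work against the state, so total compute is linear in sequence length, while a full-attention head would have to keep keys and values for every token.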

This isn’t some paper concept sitting in a lab. This is production-grade architecture already deployed across every model from 0.8B to 397B.

Breaking Down the Magic #2: Native Multimodal “Early Fusion”

Small models in the past usually followed a “learn text first, then bolt on vision” pattern. You’d train a pure text model first, then use an adapter or bridge to hook up an image encoder afterward.

Qwen 3.5’s approach is different: from the earliest stage of training, visual and text data are trained together jointly.

This approach, learning to see and read together from day one, shows up in the numbers:

- Video-MME (with subtitles): 84.5—a 9B model that can understand video content

- OmniDocBench v1.5: 87.7—document understanding that far exceeds GPT-OSS-120B

- Even the smallest 0.8B model can handle video—the first sub-1B model in history that can do this

Why does “early fusion” matter so much? Because it lets the model establish the correspondence between vision and language at the lowest level, instead of wiring them together after the fact. Like a kid who grew up bilingual versus someone who picked up a second language as an adult—the fluency of thought is completely different.

Breaking Down the Magic #3: RL Pushed Down to Small Models

This is the most easily overlooked breakthrough, but probably the one with the biggest impact.

In the past, reinforcement learning (RL) was mostly the privilege of big models. The reason was simple: RL needs the model to have enough “baseline capability” to learn from feedback. You can’t teach a model that can’t even do basic arithmetic how to solve calculus.

But the Qwen team took RL techniques built around million-scale agent environments and applied them directly to the small 9B model.

The result: 9B gained powerful “autonomous task decomposition” capability. Facing complex math or coding problems, it’s no longer just “predicting the next token”—it can reason through logic step by step, doing multi-step reasoning like a human.

This shows up in the HMMT Feb 2025 math test: 9B scored 83.2, beating GPT-OSS-120B’s 76.7. A 9B model beating a 120B model on math reasoning—that’s the power of RL.

The Key Decision: Why Does 9B Stick With Dense Instead of MoE?

This design decision deserves its own section, because it’s deeply counter-intuitive.

Across the Qwen 3.5 family, the larger models (35B, 122B, 397B) mostly adopt MoE (Mixture of Experts) architecture to save compute—for example, the 397B actually has 512 experts but only activates 10 at a time, so active parameters are just 17B.
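For readers new to MoE, here is a minimal sketch of top-k expert routing using the 512-expert, 10-active figures above. The random scores stand in for a learned router’s output, and `route_top_k` is a hypothetical helper, not Qwen’s implementation.

```python
import random


def route_top_k(router_scores, k):
    """Return the indices of the k highest-scoring experts for one token."""
    ranked = sorted(
        range(len(router_scores)),
        key=lambda i: router_scores[i],
        reverse=True,
    )
    return sorted(ranked[:k])


NUM_EXPERTS = 512     # from the article
ACTIVE_EXPERTS = 10   # from the article

rng = random.Random(0)
# Stand-in for the scores a trained router would produce for one token.
router_scores = [rng.random() for _ in range(NUM_EXPERTS)]

chosen = route_top_k(router_scores, ACTIVE_EXPERTS)
active_fraction = ACTIVE_EXPERTS / NUM_EXPERTS

print("experts used for this token:", chosen)
print(f"fraction of experts active: {active_fraction:.1%}")
```

Only about 2% of the experts run per token. The active parameter share (17B of 397B) is higher than that because non-expert layers such as attention run for every token.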

But 9B goes the other way, adopting pure Dense architecture.

What does that mean? Every single computation, all 9 billion parameters show up for work. No “expert rotation,” no “partial rest.”

Why?

Extreme Compression of Knowledge Density

9 billion parameters take up about 18GB of VRAM in BF16, and only around 5GB after 4-bit quantization. That footprint is light for modern hardware.

Going Dense means: every parameter contributes to every inference. No waste.
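The VRAM figures above follow from simple arithmetic: parameter count times bytes per parameter. A minimal sketch, counting only the weights (the function name is illustrative):

```python
def weight_memory_gb(num_params, bits_per_param):
    """Memory for the weights alone, in GB (1 GB = 10^9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


PARAMS_9B = 9e9

for label, bits in [("BF16", 16), ("INT8", 8), ("4-bit", 4)]:
    print(f"{label:5s} {weight_memory_gb(PARAMS_9B, bits):.1f} GB")
```

The raw 4-bit figure is 4.5GB; real quantized files also store scaling metadata, which is consistent with the roughly 5GB quoted above. Activations and the KV cache come on top of these numbers.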

Fewer Hallucinations, Stronger Logic

This is the more important reason. The essence of MoE architecture is “let different experts handle different problems.” It’s efficient, but there’s a side effect: when the routing mechanism misjudges and assigns a problem to the “wrong expert,” hallucinations become more likely.

Dense models don’t have this problem. All parameters participate in every inference—like having an entire team sitting together to discuss, instead of only sending one or two people to the meeting.

When handling complex math and deep logical reasoning, Dense models typically perform more solidly and hallucinate less than MoE models with equivalent active parameters.

This is part of why 9B Dense scores 83.2 on HMMT math.

What This Actually Means for Enterprise Local Deployment

OK, enough architecture talk. Back to the question I actually care about: what does this mean for enterprises wanting to deploy AI on-premise?

In my earlier post on enterprise on-premise LLM architecture, I made the point that the core reason enterprises choose local deployment usually isn’t performance—it’s data sovereignty and trust.

Qwen 3.5-9B changes the cost-performance equation for “local deployment” entirely:

The Hardware Bar Drops Drastically

| Precision | VRAM Required | Hardware |
|---|---|---|
| BF16 (full precision) | ~18GB | RTX 3090 / RTX 4090 |
| 4-bit quantization | ~5GB | RTX 3060 12GB / M1 Mac |

A model that runs on a single RTX 3060 with capability close to last generation’s 80B model. What does that mean? It means you don’t need an A100 server: a regular workstation or even a laptop can do this.

Three Ways to Run It, Three Budget Tiers

1. Full fidelity, no quantization (FP16/BF16 precision)

If you want the full 9B model with no compression, the weights alone take about 18GB of VRAM. Add a buffer for compute, and you typically need 20GB to 24GB of VRAM.

- Windows / Linux PC: You need a high-end GPU, like an NVIDIA RTX 3090, RTX 4090, or a high-end RTX 50 series card.

- Mac: A MacBook or Mac Studio with Apple Silicon (M1/M2/M3/M4 Pro/Max/Ultra chips) with 32GB+ unified memory.

2. Best value, runs smoothly (INT8 light quantization)

This is what most people pick. Compressed to 8-bit, the model loses very little quality, but only needs about 10GB to 12GB of VRAM to run smoothly, with very fast generation speed.

- Windows / Linux PC: Mid-range GPUs like NVIDIA RTX 3060 (12GB version), RTX 4070, or 4070 Super handle it easily.

- Mac: A Mac with 16GB unified memory (even the base M-series chip is fine).

3. Lowest barrier to entry (INT4 deep quantization)

If your computer is older, or you’re on a regular gaming laptop, you can go with a 4-bit quantized version (for example, in GGUF format). This version sacrifices a little complex reasoning ability, but the weights shrink to around 5GB and the total VRAM requirement drops to just 6GB to 8GB.

- Windows / Linux PC: NVIDIA RTX 2060, 3050, 4060, or even many gaming laptops’ discrete GPUs can run it.

- Pure CPU run: If you don’t have a discrete GPU at all, as long as your computer has 16GB+ system RAM, you can run it purely on CPU—it’ll just be slower (roughly human typing speed).
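The three tiers can be condensed into a small lookup. This sketch uses the lower bound of each VRAM range quoted above as the cutoff; the function name and the choice of lower bounds are assumptions, and real headroom depends on context length and runtime.

```python
# (tier name, minimum VRAM in GB), taken from the lower bound of each range above.
TIERS = [("FP16", 20), ("INT8", 10), ("INT4", 6)]


def pick_tier(vram_gb, system_ram_gb=0):
    """Suggest the highest-precision tier that fits, or a CPU fallback."""
    for name, min_vram in TIERS:
        if vram_gb >= min_vram:
            return name
    # No suitable GPU: the article's CPU path needs 16GB+ of system RAM.
    if system_ram_gb >= 16:
        return "INT4 on CPU"
    return None


print(pick_tier(24))                    # RTX 3090 / 4090 class
print(pick_tier(12))                    # RTX 3060 12GB class
print(pick_tier(8))                     # gaming laptop GPU
print(pick_tier(0, system_ram_gb=16))   # no discrete GPU
```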

Ready to Go on Every Platform

| Platform | Status |
|---|---|
| Hugging Face | Live |
| Ollama | `ollama run qwen3.5:9b` |
| vLLM / SGLang | Officially recommended |
| llama.cpp | Supported |
| MLX (Apple Silicon) | Supported |
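If you want to call the model from a script rather than the interactive prompt, a minimal sketch against Ollama’s local REST API might look like this. It assumes Ollama is running on its default port (11434) and that the `qwen3.5:9b` tag from the table has already been pulled; the helper names are illustrative.

```python
import json
import urllib.request

# Ollama's default local endpoint; change it if your server listens elsewhere.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt, model="qwen3.5:9b"):
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, model="qwen3.5:9b", timeout=300):
    """Send the prompt and return the generated text (needs a running server)."""
    request = build_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read())["response"]
```

With the server running, `generate("Explain linear attention in two sentences.")` returns the model’s reply as a string.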

The Apache 2.0 license permits commercial use, modification, and redistribution, as long as you keep the license and attribution notices.

262K Context = Enterprise-Grade Application Threshold

A native 262K-token context window is roughly equivalent to 200,000 Chinese characters. Which means:

- You can drop an entire legal contract in for analysis

- You can feed in tens of thousands of lines of code at once

- You can do cross-document knowledge integration

And this is “native” support, not stitched together with RAG plugins.
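Before dropping a large document in, it helps to estimate whether it fits. The sketch below derives a tokens-per-character ratio from the article’s own “262K tokens is about 200,000 Chinese characters” estimate. Reading 262K as 262,144 and reserving 8,192 tokens for the reply are both assumptions; a real tokenizer count will differ by language and content.

```python
import math

CONTEXT_TOKENS = 262_144         # assumption: "262K" read as 2**18
APPROX_CHINESE_CHARS = 200_000   # the article's rough equivalent


def fits_in_context(char_count, reserve_for_output=8_192):
    """Estimate tokens for Chinese text and check them against the window.

    Returns (fits, estimated_tokens). The output reserve is a hypothetical
    default, not an official recommendation.
    """
    estimated = math.ceil(char_count * CONTEXT_TOKENS / APPROX_CHINESE_CHARS)
    return estimated + reserve_for_output <= CONTEXT_TOKENS, estimated


print(fits_in_context(100_000))   # a long contract
print(fits_in_context(200_000))   # right at the limit, no room left for output
```

For anything close to the limit, count tokens with the model’s actual tokenizer instead of relying on this ratio.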

Honestly

Writing to this point, I have to be honest about a few things.

1. Benchmarks ≠ Real-World Use

CNBC stated it plainly in their reporting: “could not independently verify Alibaba’s benchmark claims.”

Benchmarks are run by the vendors themselves. Evaluation methodology, prompt format, temperature settings, few-shot count—all of these affect the score. Different sources reported different scores for the same comparison target (like GPT-OSS-120B), which tells you something on its own.

So those “9B beats 120B” headlines? Take them with a grain of salt, don’t swallow them whole.

2. I Got It Running, But Haven’t Retested My Actual Scenarios

I already pulled 9B down with Ollama and ran it—basic dialogue and reasoning are indeed smooth. But the scenarios I ran in my local LLM Excel piece with Gemma 3—Excel parsing, structured data extraction, multi-step reasoning—I haven’t re-run those with Qwen 3.5-9B yet.

So the architectural analysis in this post is based on officially published technical information and benchmark data. Real-scenario comparative testing will come in a separate post once I’m done.

3. The “God-Tier Small Model” Label Needs Time to Verify

The community is excited right now, which is normal. But history tells us that lots of models that look strong on benchmarks expose problems in actual use—Chinese understanding might be off, long-context reasoning might break down, tool calling in Agent scenarios might be unstable.

Real “god-tier” status needs time and heavy real-world use from many users to validate—it doesn’t get crowned by a benchmark table.

4. What the Open-Source Ecosystem Actually Means

That said, even if you discount the claims, Qwen 3.5-9B’s arrival is still significant. It proves one thing:

“Good architecture + high-quality data + excellent reinforcement learning” matters far more than just making the model bigger.

Hybrid attention (Gated Delta Network), native multimodal early fusion, RL pushed down to small models—these three breakthroughs cram capabilities that used to belong only to big models into something that runs on a single RTX 3060.

For independent developers, academic researchers, or enterprises that want to deploy AI applications on-premise, this is one of the best cost-performance options currently available in the open-source community.

Is it really “god-tier”? Run it and see.

Further Reading

- Enterprise On-Premise LLM System Architecture Blueprint — Why enterprises want local deployment, and how to build it

- The Right Way to Run Local LLMs Against Excel — Hands-on Excel parsing with Gemma 3

- 2026 Lunar New Year: China’s Open-Source Models Exploded All at Once — How to pick between Kimi, Qwen, GLM, and MiniMax

- How to Pick AI Models in 2026: A Practical Guide Across 7 Scenarios × 9 Models — Model selection advice for different scenarios

- Can Local Models Really Do Tool Calling? The Brutal Truth from ToolCall-15 — 9B tool calling is the ceiling; 27B dense is the sweet spot

- Burning LLMs Directly Into Silicon: Taalas’ Crazy Bet — If inference cost goes to zero, the rules for local small models change

- Qwen 3.5 Official Blog — Official technical report

- VentureBeat: Qwen3.5-9B beats GPT-OSS-120B — External coverage

One-sentence takeaway: 9B parameters beat 120B not through black magic, but through three solid architectural innovations—hybrid attention, early fusion, and RL pushed down. But benchmarks aren’t reality. Run it and see.